EdTech Testbeds

Developing models for a new testbed to improve evidence in EdTech

When it comes to educational technology, evidence can be hard to find. Working with Nesta and Prof. Mike Sharples, we proposed four models to build evidence for informing EdTech decisions.

Clients

- Nesta

Domains

- Education

- Evidence

- Technology

Services

- Actionable research

- Workshop design and facilitation

- Expert consultation

How can we improve EdTech evidence?

One of the core aims of Nesta’s Education team is to ‘empower learners, teachers and learning institutions to make more effective use of technology and data’. Through their work in this area, the team realised that something critical was missing from the educational technology (EdTech) ecosystem: evidence. A lack of good evidence means that it can be hard for schools to choose the right EdTech for their needs, and for EdTech suppliers to test and improve their products to better serve students and teachers – leading to cycles of hype and disappointment for EdTech users and creators alike.

To address this problem, Nesta began exploring the idea of developing a testbed in the UK to improve the state of evidence, and started building a partnership with the Department for Education to set one up. But first, they needed a better understanding of what such a testbed could look like.

To support the design of this testbed, we worked with Prof. Mike Sharples – an expert in the EdTech field – to develop four models for building evidence around EdTech. The purpose of these models was to give testbed designers a set of high-level options to choose between or combine, and to help them understand how best to implement each type of testbed. This would give them a good starting point for specifying the design of the real testbed.

Our work culminated in the publication of a report that Nesta has been using to guide the design of their EdTech Innovation Testbed in collaboration with the Department for Education.

Our report, *EdTech testbeds: models for improving evidence*, suggests four models for an EdTech testbed in the UK.

Report chapters

- The challenge: make more effective use of technology in education

- Learning from case studies of existing testbeds

- Testbed models

- Comparison and analysis of models

- Considerations for implementing the models

Understanding the EdTech evidence system

Before proposing models for testbeds, we wanted to understand why the EdTech system wasn’t producing good evidence. The problem is complex – there are many different actors involved in EdTech, each with their own challenges around evidence. So, as well as reviewing the literature, we talked to teachers, EdTech suppliers, researchers, policymakers, and school administrators. We used what we learned to identify the key barriers to good evidence.

We also wanted to understand how each actor could be part of a solution. We found that everyone in EdTech can both help generate evidence and use evidence. This helped us see how different actors could play different roles in a testbed. These insights then informed the models we specified.

| Role | Generates evidence by | Uses evidence for |
|---|---|---|
| Researcher | Drawing on the literature, running studies, giving professional judgement | Developing theories, inputting into EdTech design |
| EdTech supplier | Providing data and software infrastructure, running studies | Designing products, testing and improving products, proving products work |
| Teacher | Participating in studies, running studies, giving professional judgement | Choosing EdTech, using EdTech effectively |
| School leader or administrator | Developing data-gathering systems, running studies, giving professional judgement | Choosing EdTech, implementing EdTech effectively |
| Policymaker | Enabling any of the other roles to generate evidence, e.g. by providing funding or commissioning research | Guiding policy |

As well as understanding the EdTech system, we studied existing solutions. We mapped the landscape of organisations working on the problem in the UK to see where the gaps were. We also wrote case studies on five testbeds in other countries, which gave us specific examples of how a testbed could work and helped us see the variety of possible testbed designs. Finally, we held a workshop with teachers, policymakers, and EdTech developers to build additional testbed models that integrated their experiences.

Developing models for EdTech testbeds

We drew together all that we had learned to develop four testbed models:

| Model | Description | |

|---|---|---|

|

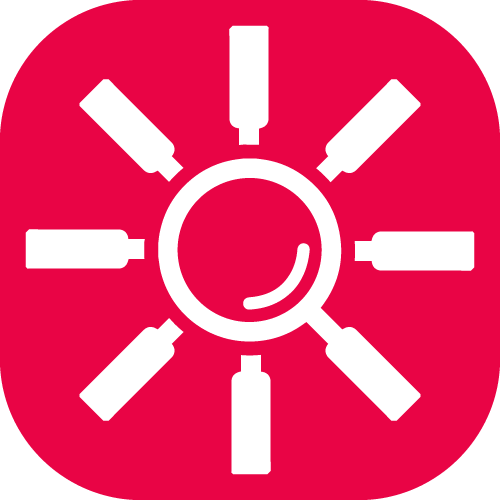

Co-design | EdTech suppliers, researchers, teachers, and students work together to identify educational needs and opportunities, and to develop a combination of technology and pedagogy to address these. |

|

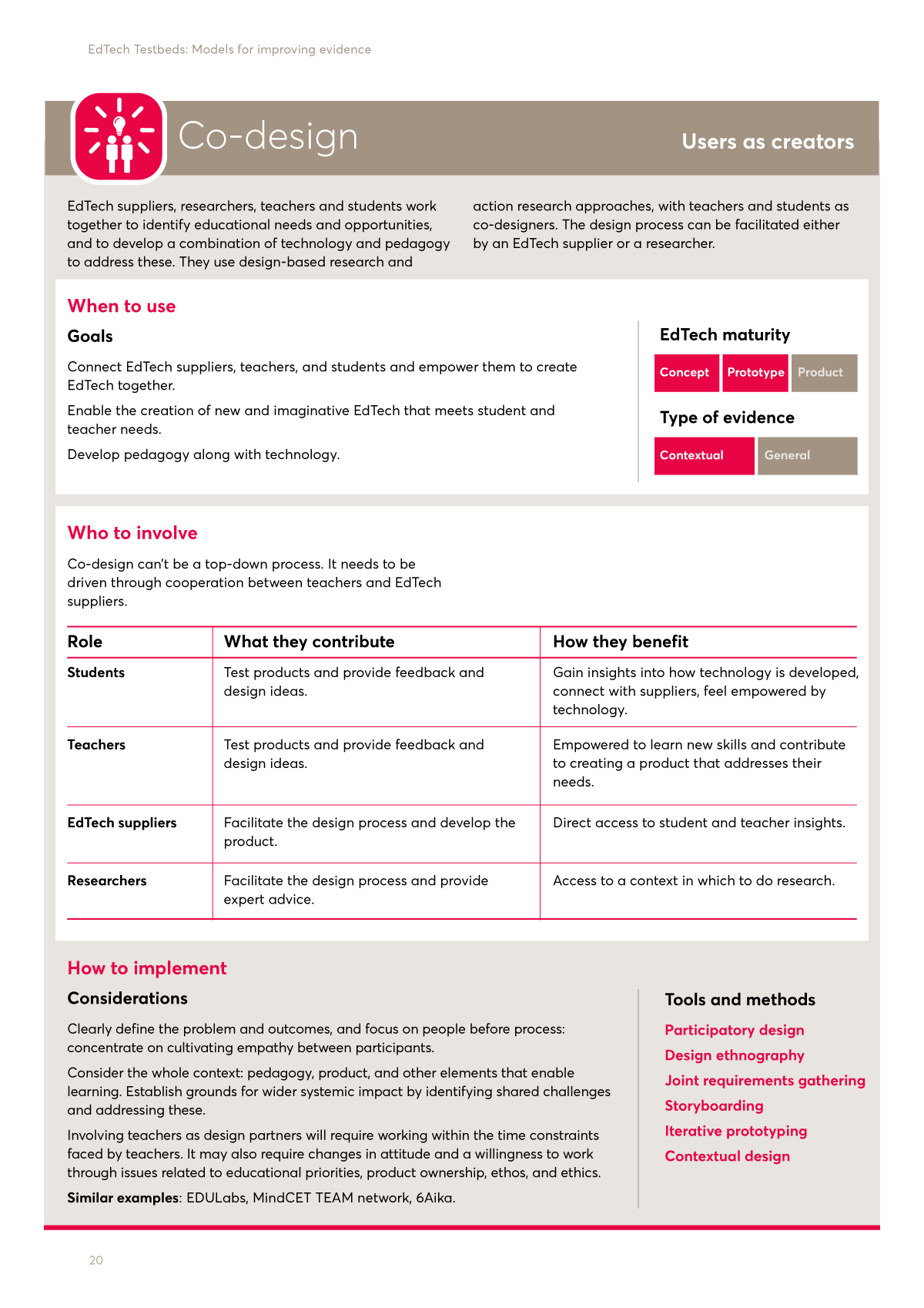

Test and learn | EdTech suppliers work with schools to rapidly test their product in a school setting so they can improve it. |

|

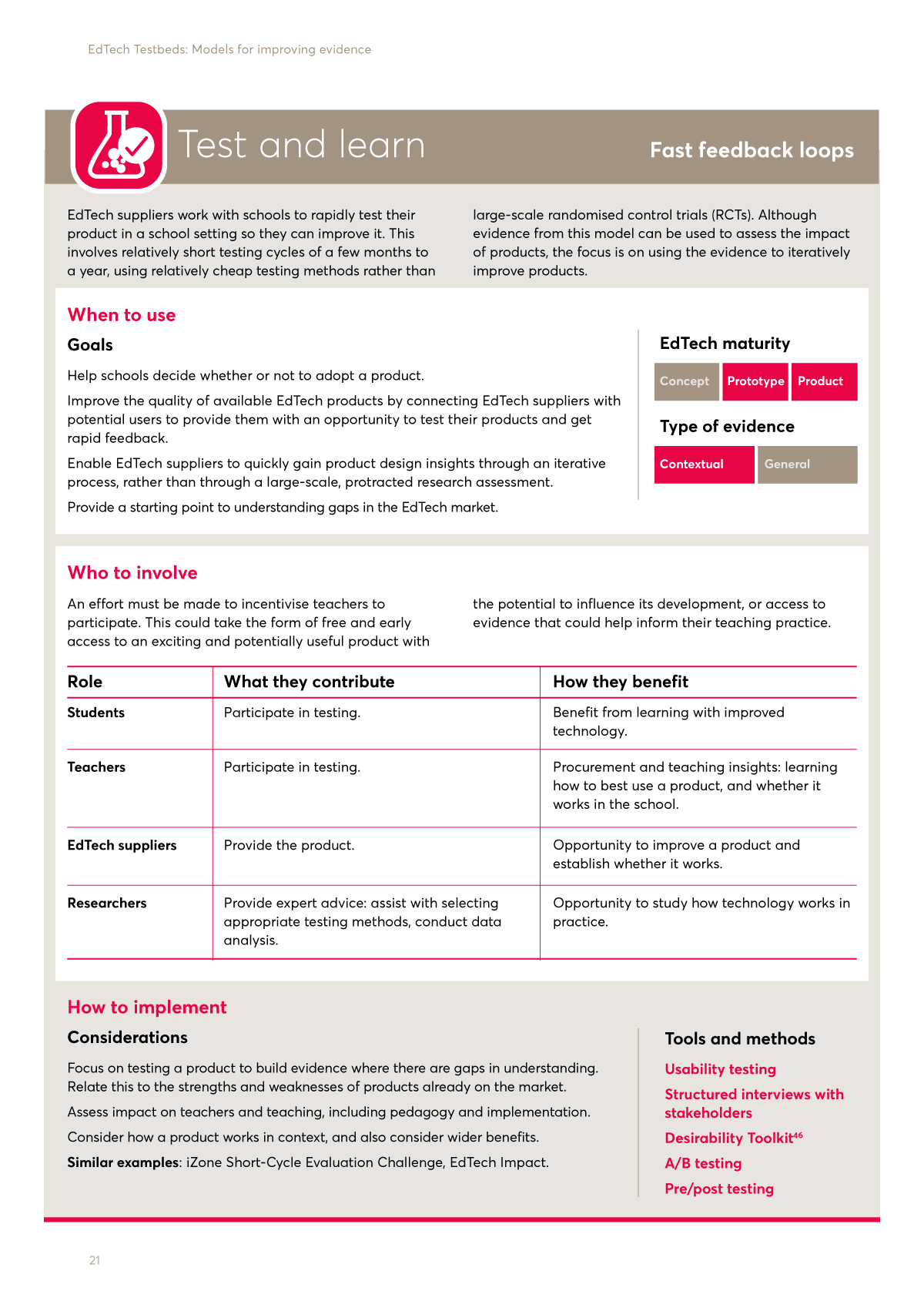

Evidence hub | A space for schools and policymakers to work with EdTech developers and researchers to generate evidence about impact, synthesise it, and disseminate evidence-based advice to guide adoption and scaling. |

|

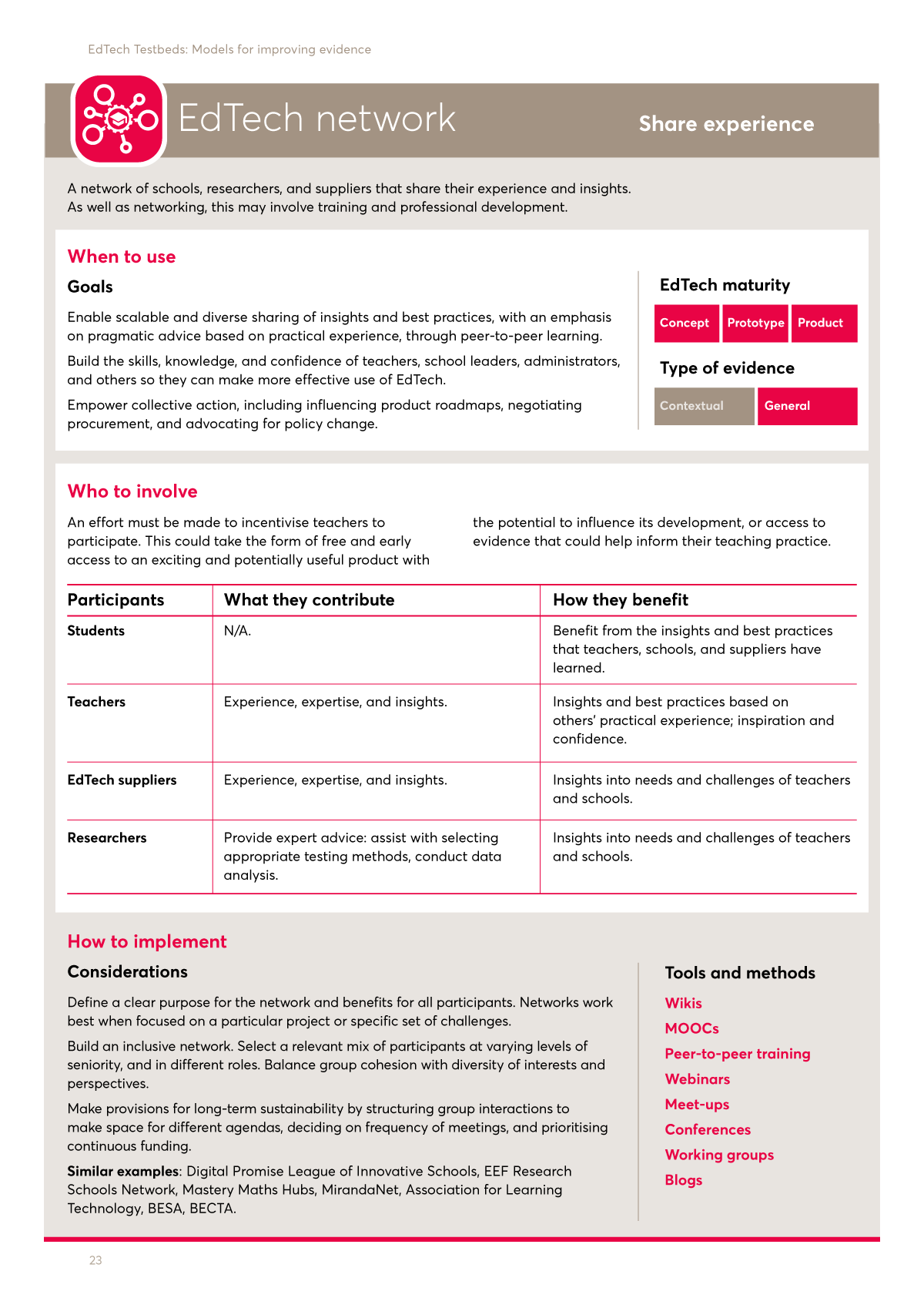

EdTech network | A network of schools, researchers, and suppliers that share their experience and insights. As well as networking, this may involve training and professional development. |

For each model, we wanted to address three main questions: When should you use this model? Who should you involve, and how? And finally, how should you implement it? We profiled each model on a single, easy-to-reference page so that people could share and compare the set.

We wanted to make sure the four models covered the space of possibilities for a testbed. To do this, we identified the most important variables along which testbeds could differ and checked that our models covered the full range of each. For example, the maturity of the EdTech product being tested is an important variable, and our models cover all levels of maturity, from concept to prototype to product.

The Co-design model.

The Test and learn model.

The Evidence hub model.

The EdTech network model.

Informing the design of EdTech testbeds

These models are already contributing to the design of real-life EdTech testbeds. In April 2019, Nesta announced a £4.6 million funding programme in partnership with the Department for Education to evaluate EdTech products, drawing on some of the lessons from our report.

This work is part of a broader interest of ours: how do you build infrastructure that helps people generate and use evidence? Many fields struggle with this, and their impact is limited as a result. There are often calls for better evidence, but without good infrastructure to support it, making progress can be tough.

Does this sound like a familiar challenge? If so, get in touch – we'd love to hear about it.